Apropos

The makers of the Raspberry Pi recently announced network boot support for the Raspberry Pi 4. Earlier models could already boot from the network, but the Raspberry Pi 4 has an onboard EEPROM to which we can upload PXE-capable boot code, making the SD card unnecessary.

Previously, when an earlier-model Raspberry Pi started its boot cycle, its first action was to look in the first FAT32 (boot) partition on the SD card for bootcode.bin and cmdline.txt. These files contain the firmware initialization routines and some kernel parameters, respectively. The firmware initializes the hardware and then boots the Linux kernel, and the kernel parameters specify which root file system to mount.

On the Pi 4, the boot code that used to live in bootcode.bin is stored in the onboard EEPROM, so the Pi no longer needs to read from or write to an SD card. This makes the Raspberry Pi more stable and easier to manage centrally.

In this guide, we’ll be taking the following actions to prepare our proof of concept:

- install and configure a FreeNAS virtual machine

- prepare the Raspberry Pi filesystem on the NFS share

- enable PXE and reconfigure the boot order on the Raspberry Pi

- configure the pfSense DHCP server to support network booting

Step 1: install and configure a FreeNAS virtual machine

Basic FreeNAS virtual machine installation:

NOTE: during virtual machine configuration, make sure to add a second disk. The first (OS) disk cannot be used to share files.

Create a storage pool:

In the FreeNAS GUI, navigate to Storage, then Pools, in the left pane and add a new storage pool, selecting the second disk of the virtual machine. I called mine POOL1.

Next, enable the SSH service on the FreeNAS virtual machine: flip the Running switch, tick the check box to start it automatically, and then configure the service from its Actions column.

Now that the SSH server is running on the NAS, we’ll connect with a PuTTY client, logging in as root with the root password.

Next we’ll be creating a directory to contain all our working files for this project:

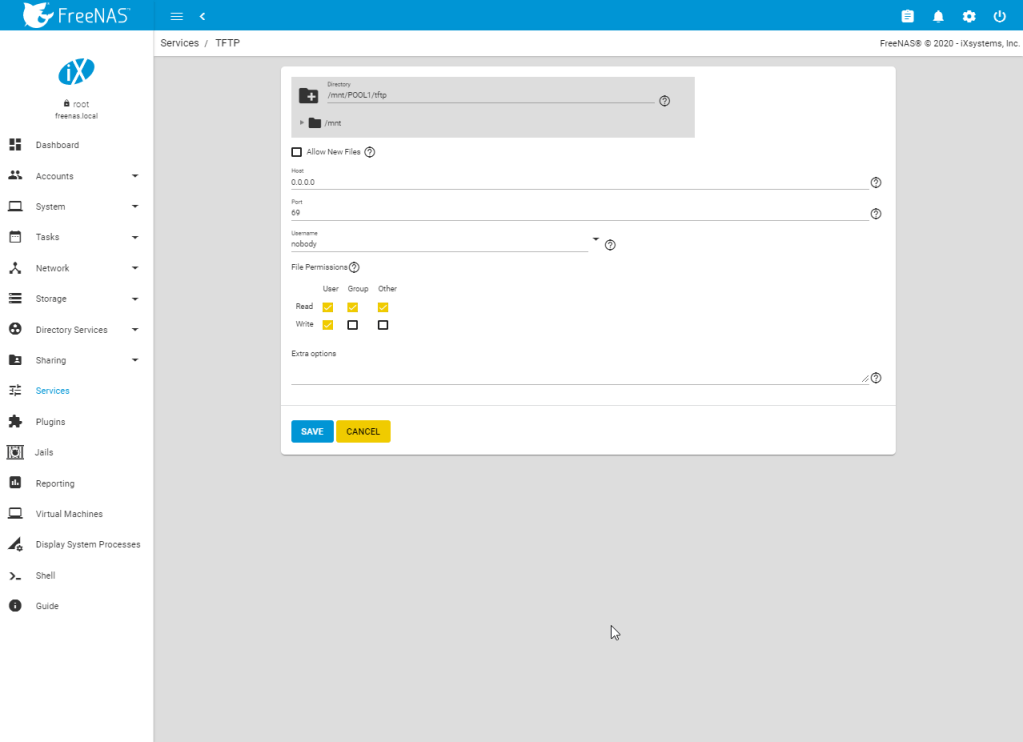

mkdir /mnt/POOL1/tftp

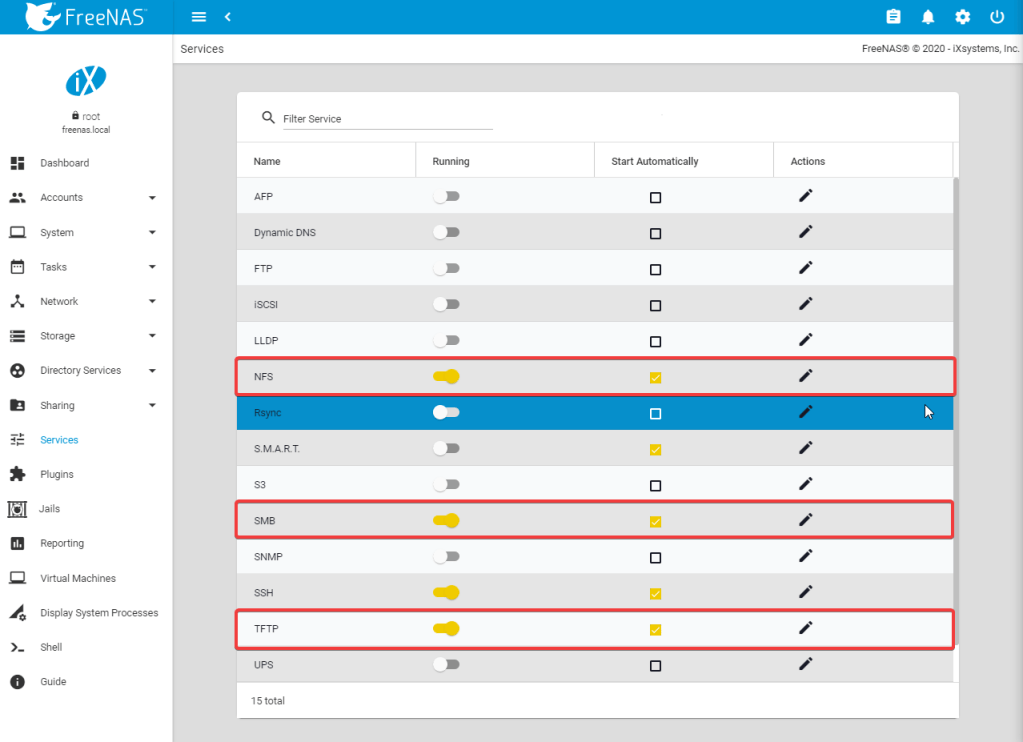

Next we will enable the NFS, SMB, and TFTP services on the FreeNAS machine and configure their parameters:

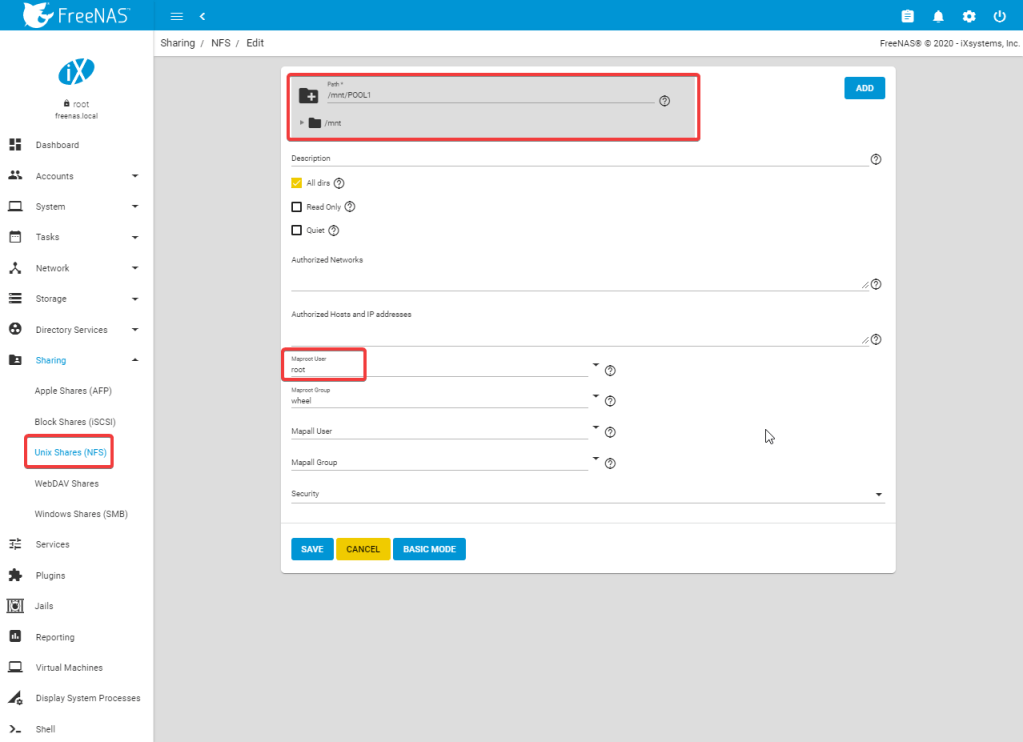

Next we need to configure an NFS share to host the Raspberry Pi’s file systems.

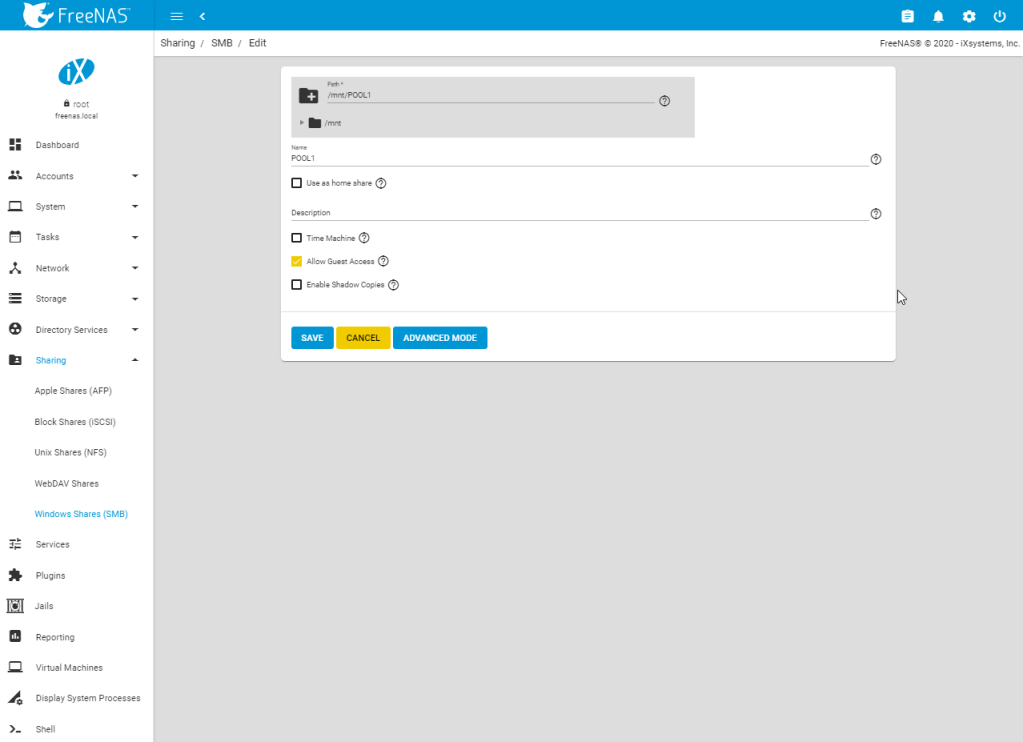

For convenience, we will also share the directory over SMB so we can browse it from a Windows workstation.

Step 2: prepare the Raspberry Pi filesystem on the NFS share

To prepare our NFS share with the files needed to boot our Raspberry Pi, we will SSH into our FreeNAS box, download and extract the Raspbian OS image, mount the image, and copy the boot and root files to a folder in our NFS share.

# download any of the Raspbian OS images at https://www.raspberrypi.org/downloads/raspbian/

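# --trust-server-names saves the download under the file name of the redirect target (e.g. 2020-02-13-raspbian-buster-full.zip) instead of raspbian_full_latest

wget --trust-server-names https://downloads.raspberrypi.org/raspbian_full_latest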

# unzip the compressed image

unzip 2020-02-13-raspbian-buster-full.zip

# create a loopback device for our image file

mdconfig -a -t vnode -u 0 -f ./2020-02-13-raspbian-buster-full.img

# create a folder to mount the boot partition on

mkdir /mnt/img1

# create a folder to mount the root partition on

mkdir /mnt/img2

# mount the fat partition of our image

mount_msdosfs /dev/md0s1 /mnt/img1

# mount the linux partition of our image

mount -t ext2fs -o ro /dev/md0s2 /mnt/img2

# create a directory for our files in the tftp server directory and name it after the serial number of your Raspberry Pi; the Pi will search for that directory during boot

mkdir /mnt/POOL1/tftp/my-rpi-serial-number

# also create a rootfs directory to hold the root system files of our Raspberry Pi

mkdir /mnt/POOL1/tftp/my-rpi-serial-number/rootfs

# now copy the boot files into place

rsync -av /mnt/img1/ /mnt/POOL1/tftp/my-rpi-serial-number

# and also the root file system

rsync -av /mnt/img2/ /mnt/POOL1/tftp/my-rpi-serial-number/rootfs

# now that we have our files in place on the NFS server, we can unmount and unplug our image

# first unmount both partitions which we have mounted from our loop device

umount /mnt/img1

umount /mnt/img2

# now free up the loop device

mdconfig -du md0
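The directory name used above has to match what the Pi asks for. As a rough sketch, assuming the bootloader requests the last 8 hex digits of the `Serial` line in `/proc/cpuinfo` (which matches the 8-character name used later in this guide), the name can be derived on a running Pi like this. The function name `pi_tftp_dir` is made up for this example.

```shell
#!/bin/sh
# Print the last 8 hex digits of the "Serial" line from a file in
# /proc/cpuinfo format. Assumption: this is the directory name the
# bootloader requests over TFTP when it uses the serial number.
pi_tftp_dir() {
    cpuinfo="${1:-/proc/cpuinfo}"
    # The line looks like: "Serial<tabs>: 100000007313c84f"
    awk -F': ' '/^Serial/ { s = $2; print substr(s, length(s) - 7) }' "$cpuinfo"
}

# Usage on the Raspberry Pi itself:
#   pi_tftp_dir
```

Run it on the Pi while it is still booted from an SD card, then use the printed value in place of my-rpi-serial-number on the NAS.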

Step 3: enable PXE and reconfigure the boot order on the Raspberry Pi

To enable network booting on the Raspberry Pi, we will tell the bootloader in the EEPROM to try network booting if no SD card is found. We can do this by modifying the boot order in the bootloader configuration.

You can display the currently active configuration using:

vcgencmd bootloader_config

To change these bootloader configuration items, you need to extract the configuration segment, make changes, re-insert it, then reprogram the EEPROM with the new bootloader. The Raspberry Pi will need to be rebooted for changes to take effect.

# Extract the configuration file

cp /lib/firmware/raspberrypi/bootloader/stable/pieeprom-2020-01-17.bin pieeprom.bin

rpi-eeprom-config pieeprom.bin > bootconf.txt

# Edit the configuration using a text editor e.g. nano bootconf.txt

# Change the boot order to 0x21. The digits are read from right to left, so the bootloader tries the SD card first and falls back to network boot

BOOT_ORDER=0x21

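# The following option makes the Raspberry Pi look for its boot files in a folder named after the MAC address of the Pi. The default (TFTP_PREFIX=0) uses the serial number instead, which is what the directory created in step 2 is named after, so name the directory to match the setting you choose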

TFTP_PREFIX=2

# Save the new configuration and exit editor

# Apply the configuration change to the EEPROM image file

rpi-eeprom-config --out pieeprom-new.bin --config bootconf.txt pieeprom.bin
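If you would rather script the edit than open a text editor, something along these lines could work. It is only a sketch: `set_boot_option` is a hypothetical helper, and it assumes bootconf.txt holds plain KEY=VALUE lines as printed by `rpi-eeprom-config`.

```shell
#!/bin/sh
# Set KEY=VALUE in a bootloader config file: replace the line when the
# key is already present, append it otherwise.
set_boot_option() {
    file="$1"; key="$2"; value="$3"
    if grep -q "^${key}=" "$file"; then
        # Go through a temporary file so this works with any POSIX sed
        sed "s|^${key}=.*|${key}=${value}|" "$file" > "${file}.tmp" &&
            mv "${file}.tmp" "$file"
    else
        printf '%s=%s\n' "$key" "$value" >> "$file"
    fi
}

# Usage, before running rpi-eeprom-config --out ...:
#   set_boot_option bootconf.txt BOOT_ORDER 0x21
```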

To update the bootloader EEPROM with the edited bootloader:

# Flash the bootloader EEPROM

# Run 'rpi-eeprom-update -h' for more information

sudo rpi-eeprom-update -d -f ./pieeprom-new.bin

sudo reboot

See the Raspberry Pi documentation for all options: https://www.raspberrypi.org/documentation/hardware/raspberrypi/bcm2711_bootloader_config.md

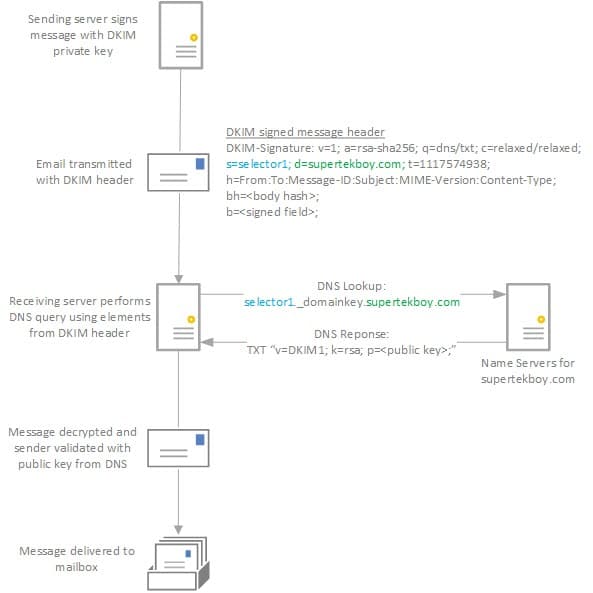

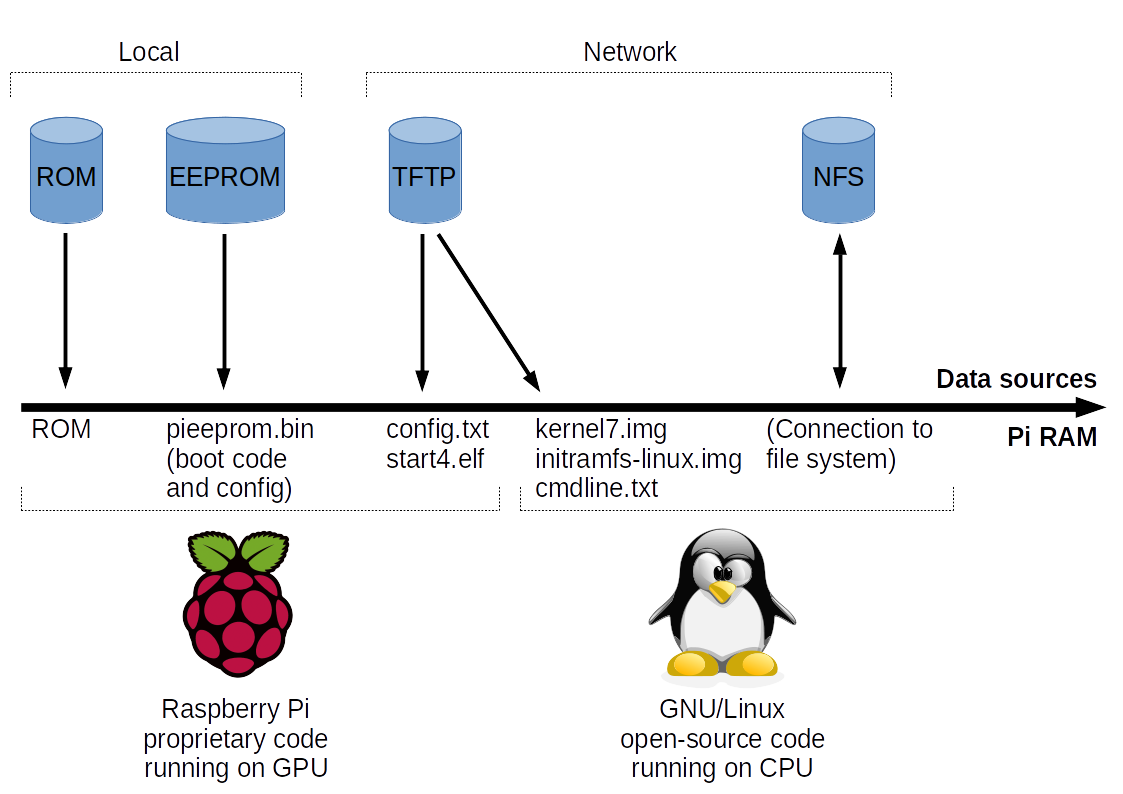

Once rebooted, and if no SD card is inserted, the Raspberry Pi will PXE boot. This process goes as follows:

- BCM2711 SoC powers up

- The onboard boot ROM checks for a bootloader recovery file (recovery.bin) on the SD card.

- If found, it executes it to flash the EEPROM and recovery.bin triggers a reset.

- Otherwise, the bootrom loads the main bootloader from the EEPROM.

- The bootloader checks its built-in BOOT_ORDER configuration item to determine what type of boot to do.

– SD Card

– Network

– USB mass storage
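To make the BOOT_ORDER step more concrete, here is a small sketch that lists the boot modes in the order they would be tried. It assumes the value is read one hex digit at a time from the right, with 1 meaning SD card, 2 network, 4 USB mass storage and f restart; `decode_boot_order` is a name invented for this example, and any other digit is simply reported as unknown.

```shell
#!/bin/sh
# Print the boot modes encoded in a BOOT_ORDER value, one per line,
# in the order the bootloader would try them (rightmost digit first).
decode_boot_order() {
    digits="${1#0x}"
    while [ -n "$digits" ]; do
        last="${digits#"${digits%?}"}"   # rightmost hex digit
        digits="${digits%?}"             # drop it for the next round
        case "$last" in
            1) echo "SD card" ;;
            2) echo "Network" ;;
            4) echo "USB mass storage" ;;
            f|F) echo "Restart" ;;
            *) echo "Unknown mode 0x$last" ;;
        esac
    done
}

# Usage:
#   decode_boot_order 0x21
```

For the value used in this guide, 0x21, this lists the SD card first and the network second.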

During the boot process, the Raspberry Pi downloads the file cmdline.txt. This file contains the kernel boot parameters, including the NFS server path that should be mounted as the root filesystem.

dwc_otg.lpm_enable=0 console=serial0,115200 console=tty1 elevator=deadline rootwait rw root=/dev/nfs nfsroot=192.168.20.118:/mnt/POOL1/tftp/7313c84f/rootfs,v3,tcp ip=dhcp
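If you manage more than one Pi, the line can be generated instead of edited by hand. The sketch below only reassembles the parameters shown in this guide, with the kernel parameter spelled `dwc_otg.lpm_enable`; `make_cmdline` and its three arguments (server address, TFTP root on the NAS, per-Pi directory name) are assumptions made for the example.

```shell
#!/bin/sh
# Print a cmdline.txt line for an NFS root, following the layout used in
# this guide: <tftp root>/<per-Pi directory>/rootfs on the NFS server.
make_cmdline() {
    server="$1"; tftp_root="$2"; pi_dir="$3"
    printf '%s\n' "dwc_otg.lpm_enable=0 console=serial0,115200 console=tty1 elevator=deadline rootwait rw root=/dev/nfs nfsroot=${server}:${tftp_root}/${pi_dir}/rootfs,v3,tcp ip=dhcp"
}

# Usage on the NAS:
#   make_cmdline 192.168.20.118 /mnt/POOL1/tftp 7313c84f > /mnt/POOL1/tftp/7313c84f/cmdline.txt
```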

The /etc/fstab file, which is responsible for mounting volumes at boot time, needs to contain a reference to the boot filesystem on the NFS server. The /boot folder needs to be mounted correctly because the Raspberry Pi update process tries to access this folder for kernel updates during startup. Below are the contents of my /etc/fstab file:

proc /proc proc defaults 0 0

192.168.20.118:/mnt/POOL1/tftp/7313c84f /boot nfs defaults,proto=udp 0 0
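The /boot source in /etc/fstab is the nfsroot path from cmdline.txt without the trailing /rootfs, so one can be derived from the other. The following is a sketch under that assumption; `boot_fstab_line` is a hypothetical helper, and the mount options are copied from the fstab line above.

```shell
#!/bin/sh
# Read a cmdline.txt file and print the matching /etc/fstab line for /boot.
boot_fstab_line() {
    # Extract "server:/path" from "nfsroot=server:/path/rootfs,options"
    src=$(sed -n 's|.*nfsroot=\([^ ,]*\)/rootfs[ ,].*|\1|p' "$1")
    [ -n "$src" ] && printf '%s /boot nfs defaults,proto=udp 0 0\n' "$src"
}

# Usage on the NAS (path as used in this guide):
#   boot_fstab_line /mnt/POOL1/tftp/7313c84f/cmdline.txt
```

Generating the line this way keeps the two files from drifting apart when the server address or directory name changes.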

Modifying the swap system

Since dphys-swapfile does not work correctly on NFS-mounted shares, we will disable swap.

# remove the software package which provides the dphys-swapfile service

sudo apt remove -y --purge dphys-swapfile

# remove the old swap file, if any

sudo rm -f /var/swap

Disabling the resize service

Resizing the partition makes no sense for an NFS root filesystem; it is not possible.

# disable the resize service so it will not give errors during boot

sudo systemctl disable resize2fs_once

sudo reboot

Update your Raspbian OS

To finish the installation, make sure to update the Raspbian OS on your NFS mount.

sudo apt update

sudo apt dist-upgrade

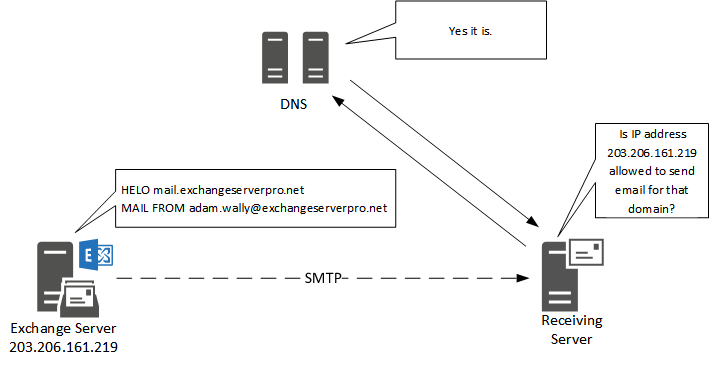

Step 4: configure the pfSense DHCP server to support network booting

The screenshot outlines the configuration the pfSense DHCP server needs to allow the Raspberry Pi to boot off the network via DHCP/PXE.

# option 43 is set to the following hexadecimal value

06:01:03:0a:04:00:50:58:45:09:14:00:00:11:52:61:73:70:62:65:72:72:79:20:50:69:20:42:6f:6f:74:ff
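The hexadecimal value is easier to trust once you see how it is put together. As far as I can tell it consists of three PXE vendor sub-options followed by an end marker: sub-option 6 (discovery control, value 3), sub-option 10 (menu prompt: timeout 0 and the text "PXE"), sub-option 9 (boot menu: a two-byte type, a length byte and the description text), and ff. The sketch below rebuilds the string from the description text. `hex_bytes` and `pxe_option43` are names made up for this example, and the description is assumed to be plain ASCII.

```shell
#!/bin/sh
# Convert a string to colon-separated hex bytes, e.g. "PXE" -> 50:58:45
hex_bytes() {
    printf '%s' "$1" | od -An -v -tx1 | tr -d '\n' | tr -s ' ' ':' |
        sed 's/^://; s/:$//'
}

# Build the option 43 value for a given boot menu description.
pxe_option43() {
    desc="$1"
    dlen=${#desc}   # description length in bytes (ASCII assumed)
    # 06:01:03        discovery control = 3
    # 0a:04:00:PXE    menu prompt, timeout 0
    # 09:<len>:00:00:<dlen>:<desc>   boot menu entry, len = dlen + 3
    printf '06:01:03:0a:04:00:%s:09:%02x:00:00:%02x:%s:ff\n' \
        "$(hex_bytes PXE)" "$((dlen + 3))" "$dlen" "$(hex_bytes "$desc")"
}

# Usage:
#   pxe_option43 "Raspberry Pi Boot"
```

Called with "Raspberry Pi Boot", this should reproduce the value entered in the DHCP server configuration above.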